Perspective on Risk - Sept. 12, 2023 (A.I.)

Our Priors Were Wrong; Ready To Admit Defeat? If You Can’t Beat Them...; Next Generation Is Around The Corner; The Man In Black Pink; AI & Financial Crisis; Board Oversight; AI Model Risk; Wonkish

Maybe Our Priors Were Wrong

Our priors on artificial intelligence models may be all wrong. In my mind, I pictured AI models as being all-knowing about facts, and quite good at sophisticated mathematics.

Instead, what we are seeing with the advent of large language models (LLMs) is that AI models excel at creativity. Witness the art-generating ability of Midjourney and the like. They are also great at brainstorming and idea generation. Witness:

There is no one definition of creativity, but researchers have developed a number of flawed tests that are widely used to measure the ability of humans to come up with diverse and meaningful ideas. The fact that these tests were flawed wasn’t that big a deal until, suddenly, AIs were able to pass all of them. But now, GPT-4 beats 91% of humans on a variation of the Alternative Uses Test for creativity and exceeds 99% of people on the Torrance Tests of Creative Thinking. We are running out of creativity tests that AIs cannot ace. (Automating Creativity on oneusefulthing Substack). The WSJ even wrote about this in M.B.A. Students vs. ChatGPT: Who Comes Up With More Innovative Ideas?

Reading these studies, it seems like there are a few clear conclusions:

AI can generate creative ideas in real-life, practical situations. It can also help people generate better ideas.

The ideas AI generates are better than what most people can come up with, but very creative people will beat the AI (at least for now), and may benefit less from using AI to generate ideas. One study estimated that GPT-4 ranged from the 93rd to the 99th percentile on the Torrance Tests of Creative Thinking.

The vast majority of the best ideas in the pooled sample are generated by ChatGPT and not by the students.

There is more underlying similarity in the ideas that the current generation of AIs produce than among ideas generated by a large number of humans.

The Crowdless Future? How Generative AI Is Shaping the Future of Human Crowdsourcing

This study investigates the capability of generative artificial intelligence (AI) in creating innovative business solutions compared to human crowdsourcing methods. …

Results showed comparable quality between human and AI-generated solutions. However, human ideas were perceived as more novel, whereas AI solutions delivered better environmental and financial value.

Finally, the next step may be a quantum improvement in coordination. What are we humans quite bad at? Meetings! Sharing information! But AIs should be able to do this well and quickly. Rather than summarizing findings into quaint PowerPoints with cute little graphics, AIs will be able to pass vast amounts of data and query each other back and forth. An LLM ‘solves’ its answers by calculating a set of vector weights that can easily be passed from one model to another. This increase in the speed of machine-to-machine coordination may be the next frontier.

Are We Ready To Admit Defeat?

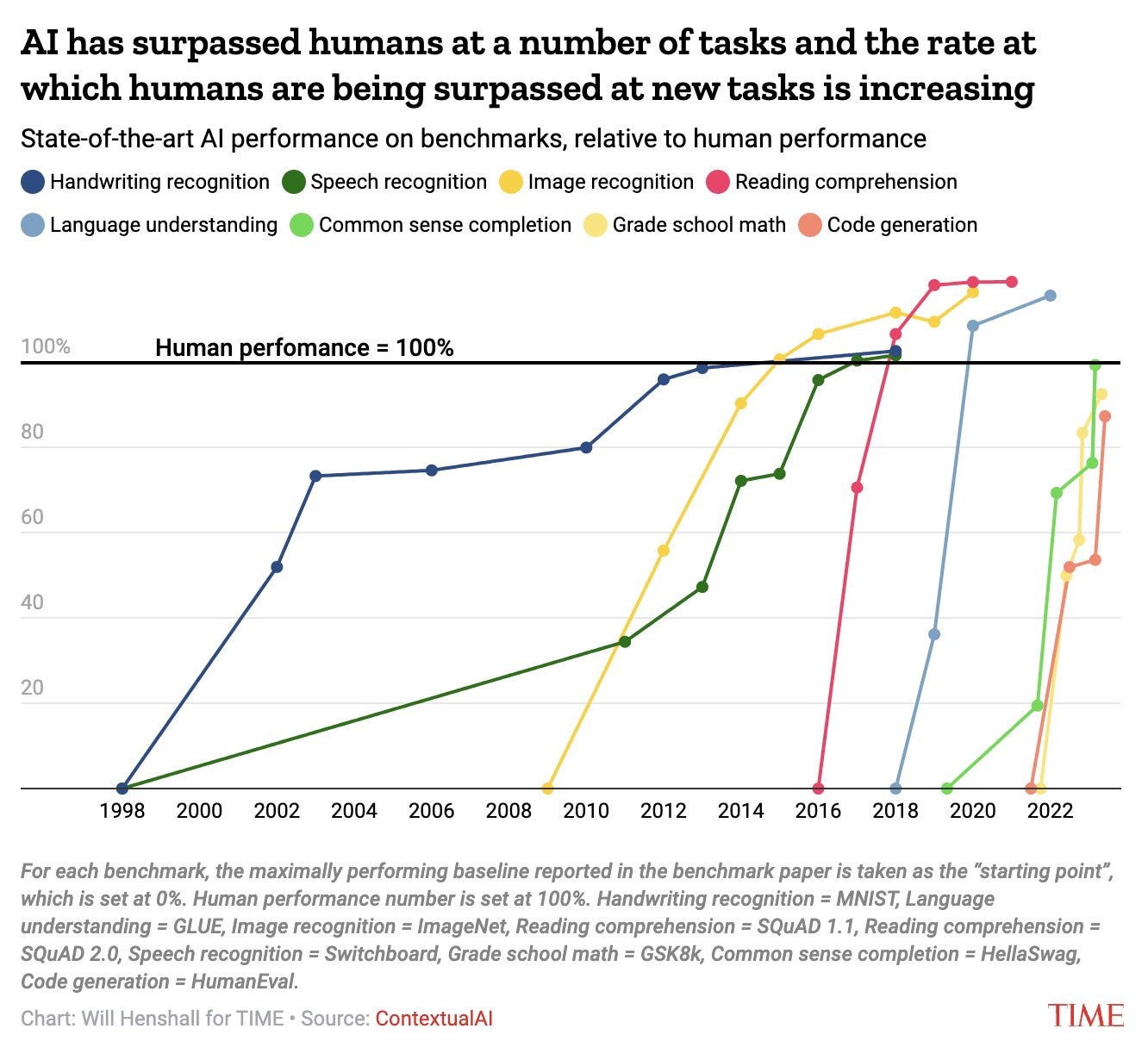

AI Is Getting Faster At Surpassing Human Performance

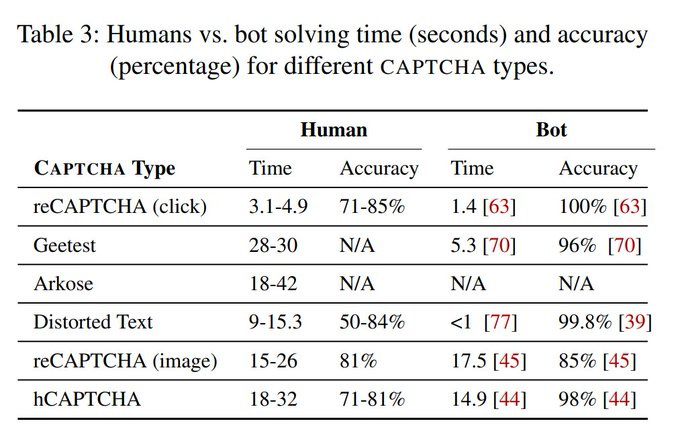

AI Solves CAPTCHAs Better Than Humans

An Empirical Study & Evaluation of Modern CAPTCHAs

For nearly two decades, CAPTCHAs have been widely used as a means of protection against bots. … In this work, we explore CAPTCHAs in the wild by evaluating users' solving performance and perceptions of unmodified currently-deployed CAPTCHAs.

Every major AI nails the Voight-Kampff test.

You’ve all probably seen Blade Runner. In the movie, the Voight-Kampff test was used by the LAPD to determine whether or not an individual was a replicant. It typically took twenty to thirty cross-referenced questions to detect a replicant. You can take the test to see if you are a replicant or human. All of the major AIs now pass the test, many in colorful ways.

LLMs Are Getting Better At Creating Their Own Prompts

Large Language Models as Optimizers

We embark on employing LLMs as optimizers, where the LLM progressively generates new solutions to optimize an objective function. … For prompt optimization, optimized prompts outperform human-designed prompts on GSM8K and Big-Bench Hard by a significant margin, sometimes over 50%.
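For readers who want a feel for the loop the paper describes, here is a minimal sketch in Python. It is not the authors' code. The idea it illustrates is that each step shows the optimizer a short list of past solutions with their scores, asks for new candidates, scores them, and repeats. Everything here is an assumption made for illustration: the toy `objective`, the prompt wording, and especially `stub_llm_propose`, a hypothetical stand-in that perturbs the best solution at random instead of calling a real model.

```python
import random


def objective(x):
    """Toy objective to maximize (assumed for illustration); peak at x = 3."""
    return -(x - 3.0) ** 2


def build_meta_prompt(history, top_k=5):
    """Format the top_k best past solutions, worst first, as the optimizer's context."""
    best = sorted(history, key=lambda pair: pair[1])[-top_k:]
    lines = [f"solution: {x:.3f}, score: {s:.3f}" for x, s in best]
    return "Propose a new solution that scores higher.\n" + "\n".join(lines)


def stub_llm_propose(meta_prompt, rng, n_candidates=4):
    """HYPOTHETICAL stand-in for an LLM call.

    Reads the last (best) solution from the prompt and returns random
    perturbations of it. A real system would send meta_prompt to a model.
    """
    last_line = meta_prompt.strip().splitlines()[-1]
    best_x = float(last_line.split(",")[0].split(":")[1])
    return [best_x + rng.gauss(0, 0.5) for _ in range(n_candidates)]


def optimize(steps=30, seed=0):
    """Run the propose / score / append loop and return the best (solution, score)."""
    rng = random.Random(seed)
    # Arbitrary starting solutions, scored once up front.
    history = [(x, objective(x)) for x in (-5.0, 0.0, 8.0)]
    for _ in range(steps):
        prompt = build_meta_prompt(history)
        for candidate in stub_llm_propose(prompt, rng):
            history.append((candidate, objective(candidate)))
    return max(history, key=lambda pair: pair[1])


best_x, best_score = optimize()
print(f"best solution {best_x:.3f} with score {best_score:.3f}")
```

The only feedback the proposer gets is the scored history in the prompt, which is why the same loop can be pointed at prompts themselves: swap the number for a piece of text and the toy objective for accuracy on a benchmark.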

A Picture Tells 1,000 Words

If You Can’t Beat Them, Have Them Join Us?

Incorporating A.I.s Into Teams (Is Not Easy)

This article studies the effects of the adoption of artificial intelligence on teams and their performance and coordination in a laboratory experiment. We posit that automation decreases organizational performance, interferes with team member coordination, and leads to behavioral changes in human co-workers. … We demonstrate experimentally that even in a task where AI outperforms humans, the replacement of a human player by an automated videogame agent decreases team performance. We also find that automation leads to an increase in coordination failures, and reduces team trust and individual effort provision. Finally, we explore the distributional consequences of introducing AI within teams and show that the performance effects are especially large in the short-term and in low- and medium-skilled teams whose skills we pre-tested. Overall, our team-based design supports a perspective that collaborative human-machine interaction is key to the positive transformation that AI may bring to teams, organizations, and work more broadly.

BP: I worry with this study that they had the answer before doing the work.

The Next Generation Of Models Is Just Around The Corner

The Man In Black Pink

Ever wanted to hear Johnny Cash sing Barbie Girl? You do now. SERIOUSLY

AI & Financial Crisis

AI will be at the center of the next financial crisis, SEC chair warns (Axios)

A.I. models may put companies’ interests ahead of investors’

Who is responsible if generative A.I. gives faulty financial advice?

I worry about things like redlining and discrimination. As the laws are written now, there is a disincentive for firms to look for discrimination in the results of their models. Some version of a ‘safe harbor’ for firms that actively look for and remediate discrimination would be useful.

The Bank of England published an interesting paper that will be useful for both internal AI model validation as well as supervisory review: Deep learning model fragility and implications for financial stability and regulation (Bank of England)

In this paper we examine a variety of these models and conduct simulations on two tasks: a) Predicting credit card default and b) predicting stock returns.

We find that models display high degrees of commonality when it comes to their predictions but less in their feature importance sets. Using the state-of-the-art SHAP values for feature importance, we find a curious result where model creators would get similar predictions but the explanations for arriving at the predictions would be very different. The results suggest the presence of a Rashomon effect. For areas where a high degree of consistency and explainability is required it might be more prudent to use simpler explainable models.

The presence of a high degree of prediction commonality does not imply that these models are duplicates of each other. From our regressions we conclude that even with subtle changes to model architecture, we can get variation in model explanations. One would assume that repeated iteration over the dataset would lead to a point where all different architectures’ predictions and explanations become duplicated. Inductive biases can significantly shift DL models’ behaviours and reasoning.

Our results have several implications for regulation and financial stability. Due to the Rashomon effect and getting different explanations we could see consumer trust being affected. The presence of different explanations presents a morally hazardous scenario where model developers could choose DL models with explanations that might be appealing to their risk and governance mechanisms. If the governance mechanisms are unaware of DL model fragility they might underweight the risk of adverse outcomes under different scenarios.

Finally, our results highlight that a model with variable explanations could be hard to debug, as there is no certainty that efforts to rectify flaws will in fact lead to desired changes.
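A toy illustration of that Rashomon effect may help. The sketch below is my own, not the paper's setup: it uses synthetic data and two one-feature linear models rather than deep networks. Two features are nearly copies of each other; model A is fit on the first and model B on the second. The attribution used is `w * (x - mean(x))`, the standard SHAP formula for a linear model under a feature-independence assumption (a simplification here, since the features are deliberately correlated). All names and parameters are assumptions chosen for illustration.

```python
import random
from statistics import fmean


def fit_one_feature(xs, ys):
    """Ordinary least squares of y on a single feature; returns (slope, intercept)."""
    mx, my = fmean(xs), fmean(ys)
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, my - slope * mx


def correlation(a, b):
    """Pearson correlation between two equal-length lists."""
    ma, mb = fmean(a), fmean(b)
    saa = sum((u - ma) ** 2 for u in a)
    sbb = sum((v - mb) ** 2 for v in b)
    sab = sum((u - ma) * (v - mb) for u, v in zip(a, b))
    return sab / (saa * sbb) ** 0.5


def mean_abs_attribution(xs, weight):
    """Average of |w * (x - mean(x))| over rows: a linear-model feature importance."""
    mx = fmean(xs)
    return fmean([abs(weight * (x - mx)) for x in xs])


# Synthetic data (assumed): feature 2 is feature 1 plus a little noise.
rng = random.Random(0)
n = 2000
x1 = [rng.gauss(0, 1) for _ in range(n)]
x2 = [a + 0.05 * rng.gauss(0, 1) for a in x1]
y = [a + c + 0.1 * rng.gauss(0, 1) for a, c in zip(x1, x2)]

# Model A only sees feature 1; model B only sees feature 2.
w_a, b_a = fit_one_feature(x1, y)
w_b, b_b = fit_one_feature(x2, y)
pred_a = [w_a * v + b_a for v in x1]
pred_b = [w_b * v + b_b for v in x2]

# Importance per feature as (feature 1, feature 2); the unused feature has weight 0.
importance_a = (mean_abs_attribution(x1, w_a), 0.0)
importance_b = (0.0, mean_abs_attribution(x2, w_b))

print("prediction correlation:", round(correlation(pred_a, pred_b), 4))
print("model A importance:", importance_a)
print("model B importance:", importance_b)
```

The two models' predictions are almost perfectly correlated, yet one explanation credits only feature 1 and the other only feature 2. That is the governance problem in miniature: a validator who looks only at predictions would see two equivalent models.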

Board Oversight of Artificial Intelligence

Board Practices: Artificial intelligence

This post presents findings from a survey of members of the Society for Corporate Governance that focused on aspects of AI, including where in the organization AI resides, use policies/framework, risk mitigation measures, education and training, and board oversight.

A useful overview of the current state of play across many industries; financial services firms made up 25% of the sample. More detail from Deloitte here.

Few firms have AI policies or formal oversight. In general, even at large firms, things appear ad hoc.

Not too comforting.

AI Model Risk Management

A new paper is out that applies Federal Reserve and OCC model risk management (MRM) guidance to the problems in HR functions.

A New Approach to Measuring AI Bias in Human Resources Functions: Model Risk Management

Unbeknownst to many, the regulation of employment in the United States is historically rooted in voluntary compliance.

Consistent with this approach, companies using or seeking to use AI for employment decisionmaking should also strive for internal compliance. … By implementing a robust MRM framework as a form of voluntary compliance, employers can have confidence that they will be able to use AI tools to fulfill and support HR functions.

I’ve long argued for a ‘safe-harbor’ for firms that are actively looking at their models for discriminatory behavior.

In the absence of legislative or regulatory change—neither of which appear to be coming in the coming years—the introduction of MRM as a countermeasure to unintentional non-compliance with federal civil rights laws is undergirded by federal administrative law principles. The voluntary compliance measures that are embodied by MRM as laid out above fit neatly into federal administrative law and Title VII.

An employer’s MRM implementation would not only prevent discrimination, but in the event that automated discriminatory employment decisions occur, remedial action can be immediately taken, preventing the need for EEOC intervention.

However, once a charge is filed with the EEOC, an MRM framework could also favorably affect the outcome of the EEOC investigation, especially if the parties engage in early voluntary mediation. MRM would be highly probative in an EEOC investigation that will endeavor to secure evidence that would determine whether there is a “reasonable cause to believe that the charge [alleging discrimination] is true.” Through MRM, the employer would have ample evidence to show the EEOC that it not only complies with the law, it invested in proactive steps to ensure that discrimination did not occur. This direct evidence of compliance would likely ensure the EEOC that the employer goes above and beyond to ensure good-faith compliance with federal employment law.

Wonkish Stuff

On Forecasting Cryptocurrency Prices: A Comparison of Machine Learning, Deep Learning, and Ensembles

Researchers have proposed predictors based on statistical, machine learning (ML), and deep learning (DL) approaches, but the literature is limited … because it focuses on predicting only the prices of the few most famous cryptos … compares different models on different cryptos inconsistently, and … lacks generality. The main goal of this paper is to provide a comparison framework that overcomes these limitations. … Our evaluation shows that DL approaches are the best predictors, particularly the LSTM, and this is consistently true across all the cryptos examined.