Perspective on Risk - Jan. 17, 2023 (Technology & Productivity)

Background Trends in Productivity; Productivity & Innovation; The Major Revolutions I Have Seen In My Lifetime; Productivity and Demand?; Some Closing Tweets; Who Needs AI (Or Maybe Just Count on the Pigeons)

The elephant in the room that not many people are talking about these days is the slowing of productivity. As Henry Mo pointed out to me in a conversation, this topic is being crowded out by near-term concerns such as the Fed, recession risk, and inflation.

I promised you a while back that I would try to weigh in on how the changes we are seeing in technology will affect us and what they mean. Here I want to link that with thinking about productivity.

GDP growth depends on changes in population (births, deaths, immigration, perhaps years productively working) and productivity. Changes in the standard of living depend on productivity, the degree of capital deepening, and the distribution of returns to labor and capital.
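As a rough illustration of the decomposition above, the sketch below makes the simplifying assumption that GDP equals the number of workers times output per worker, so GDP growth is approximately the sum of the two growth rates. The function name and the sample growth rates are hypothetical, chosen only to show the arithmetic.

```python
def gdp_growth(workforce_growth: float, productivity_growth: float) -> float:
    """Exact GDP growth when GDP = workers * output per worker (an assumption)."""
    return (1 + workforce_growth) * (1 + productivity_growth) - 1


# Hypothetical inputs: 0.5% workforce growth and 1.5% productivity growth.
exact = gdp_growth(0.005, 0.015)
approx = 0.005 + 0.015  # the usual "add the growth rates" shortcut

print(f"exact: {exact:.4%}, shortcut: {approx:.4%}")
```

The gap between the exact figure and the shortcut is the product of the two growth rates, which is negligible at rates this small. If the workforce stops growing, all GDP growth has to come from productivity.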

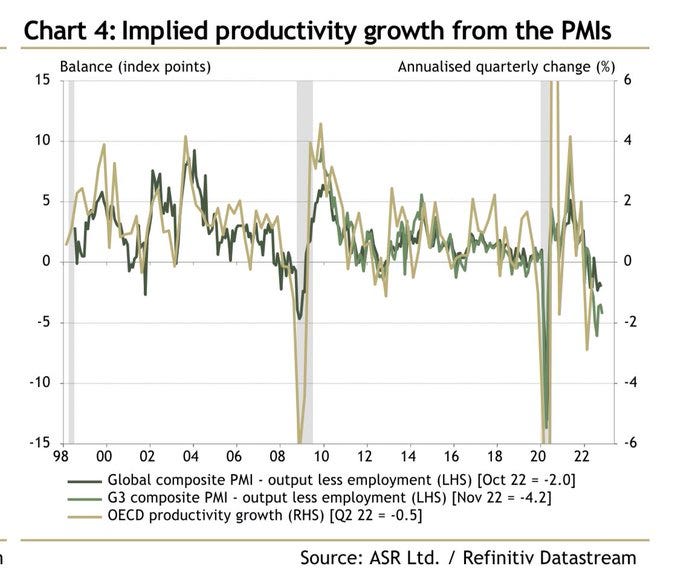

Background Trends in Productivity

Recently, the trend in US productivity has turned down. The first three quarters of 2022 each showed a year-over-year drop in average productivity per worker, the first time this has occurred since 1983.

Eli Dourado has tweeted out two useful charts. They show that since 2010, half of industries have seen a decline in total factor productivity.

There are generally two camps on why productivity has dropped.

Larry Summers and Joseph Stiglitz argue that weak demand for goods and services is leading to a lack of investment in both physical and human capital. This lack of investment, in turn, is slowing productivity growth.

Robert Gordon and Tyler Cowen argue that the late 19th and early 20th centuries were characterized by a rapid pace of technological innovation, but that the pace has slowed significantly in recent decades.

Paul Krugman has a foot in each camp.

I’m interested in examining the Gordon/Cowen argument.

Productivity & Innovation

Ethan Mollick of UPenn has published an insightful Substack post on this topic: And the great gears begin to turn again... (One Useful Thing). It is definitely worth reading.

The two engines of economic growth — productivity and innovation — have been mysteriously grinding to halt in recent decades, with long-term consequences for all of us.

a recent, convincing, and depressing paper found that the pace of invention is dropping in every field, from agriculture to cancer research. More researchers are required to advance the state of the art. In fact, the speed of innovation appears to be dropping by 50% every 13 years.

Are Ideas Getting Harder to Find?

We present evidence from various industries, products, and firms showing that research effort is rising substantially while research productivity is declining sharply. A good example is Moore’s Law. The number of researchers required today to achieve the famous doubling of computer chip density is more than 18 times larger than the number required in the early 1970s. More generally, everywhere we look we find that ideas, and the exponential growth they imply, are getting harder to find.
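To put the two figures quoted above on an annual footing, here is a minimal sketch that converts "halving every 13 years" and "18 times larger" into constant yearly rates. The function names are hypothetical, and the 45-year span used for the Moore's Law comparison is my assumption (the abstract says only "early 1970s" to "today").

```python
def annual_decline_from_halving(halving_years: float) -> float:
    """Constant yearly decline implied by a quantity halving every `halving_years`."""
    return 1 - 0.5 ** (1 / halving_years)


def annual_growth_from_multiple(multiple: float, years: float) -> float:
    """Constant yearly growth rate that compounds to `multiple` over `years`."""
    return multiple ** (1 / years) - 1


# Speed of innovation halving every 13 years (figure quoted above).
decline = annual_decline_from_halving(13)

# Researcher headcount up 18x; the 45-year span is an assumed value.
growth = annual_growth_from_multiple(18, 45)

print(f"implied decline in research productivity: {decline:.1%} per year")
print(f"implied growth in research effort: {growth:.1%} per year")
```

Under these assumptions, research productivity falls by roughly 5% a year while research effort grows by a bit more than 6% a year. That is the sense in which ever more researchers are needed just to hold the pace of progress steady.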

This paper finds that a reason, again, is the burden of knowledge: the nature of science is growing so complex that PhD founders now need large teams and administrative support to make progress, so they go to big firms instead!

Declining Business Dynamism among Our Best Opportunities: The Role of the Burden of Knowledge

We document that since 1997, the rate of startup formation has precipitously declined for firms operated by U.S. PhD recipients in science and engineering. These are supposedly the source of some of our best new technological and business opportunities. We link this to an increasing burden of knowledge by documenting a long-term earnings decline by founders, especially less experienced founders, greater work complexity in R&D, and more administrative work. The results suggest that established firms are better positioned to cope with the increasing burden of knowledge, in particular through the design of knowledge hierarchies, explaining why new firm entry has declined for high-tech, high-opportunity startups.

Thus, we have the paradox of our Golden Age of science, as illustrated in this paper. More research is being published by more scientists than ever, but the result is actually slowing progress! With too much to read and absorb, papers in more crowded fields are citing new work less, and canonizing highly-cited articles more.

Slowed canonical progress in large fields of science

The size of scientific fields may impede the rise of new ideas. Examining 1.8 billion citations among 90 million papers across 241 subjects, we find a deluge of papers does not lead to turnover of central ideas in a field, but rather to ossification of canon. Scholars in fields where many papers are published annually face difficulty getting published, read, and cited unless their work references already widely cited articles. New papers containing potentially important contributions cannot garner field-wide attention through gradual processes of diffusion. These findings suggest fundamental progress may be stymied if quantitative growth of scientific endeavors—in number of scientists, institutes, and papers—is not balanced by structures fostering disruptive scholarship and focusing attention on novel ideas.

A new paper has successfully demonstrated that it is possible to correctly determine the most promising directions in science by analyzing past papers with AI, ideally combining human filtering with the AI software. And other work has found that AI shows considerable promise in performing literature reviews.

A tool that could suggest new personalized research directions and ideas by taking insights from the scientific literature could significantly accelerate the progress of science. A field that might benefit from such an approach is artificial intelligence (AI) research, where the number of scientific publications has been growing exponentially over the last years, making it challenging for human researchers to keep track of the progress. Here, we use AI techniques to predict the future research directions of AI itself. We develop a new graph-based benchmark based on real-world data – the Science4Cast benchmark, which aims to predict the future state of an evolving semantic network of AI. For that, we use more than 100,000 research papers and build up a knowledge network with more than 64,000 concept nodes. We then present ten diverse methods to tackle this task, ranging from pure statistical to pure learning methods. Surprisingly, the most powerful methods use a carefully curated set of network features, rather than an end-to-end AI approach. It indicates a great potential that can be unleashed for purely ML approaches without human knowledge. Ultimately, better predictions of new future research directions will be a crucial component of more advanced research suggestion tools.

The Major Revolutions I Have Seen In My Lifetime

Tyler Cowen posted The major revolutions I have seen in my lifetime on his Marginal Revolution blog.

I like how he brings things together simply.

The moon landing was one of my formative childhood memories.

I’ve written before on how the collapse of the Berlin Wall was a proxy event for realigning returns to capital and labor globally.

I hadn’t thought of feminization in these terms, but it clearly applies.

I remember the first time I truly understood the power of the internet. I was working as a Bank Examiner at the Fed and I received a call from Walter Zunic, an international examiner who frequently consulted with foreign bank supervisors to help them advance their supervision programs. He was in Argentina, and he had an emergency: could I get him all of our guidance on capital market activities ASAP? The typical way at the time would have been to ship hard copy manuals by airfreight; he’d get them in a week or so (even with FedEx). Instead I was able to create PDF files and send them by email!

We just passed the 16th anniversary of the iPhone. Hard to believe it’s only been 16 years. Who else remembers their Blackberries?

So I think he is correct in marking AI as a watershed. It’s not just LLMs, but the whole AI methodology (backprop + transformers + attention -> generative) [1]. It required the development of cloud computing [2], a phenomenal reduction in the cost of computing [3], and the development of generative AI.

Language and image recognition capabilities of AI systems are now comparable to those of humans. As Dr. Max Roser notes [4]:

Just 10 years ago, no machine could reliably provide language or image recognition at a human level.

Dr. Roser cites all of the places AI is already in control:

When you book a flight, it is often an artificial intelligence, and no longer a human, that decides what you pay. When you get to the airport, it is an AI system that monitors what you do at the airport. And once you are on the plane, an AI system assists the pilot in flying you to your destination.

AI systems also increasingly determine whether you get a loan, are eligible for welfare, or get hired for a particular job. Increasingly they help determine who gets released from jail.

Several governments are purchasing autonomous weapons systems for warfare, and some are using AI systems for surveillance and oppression.

AI systems help to program the software you use and translate the texts you read. Virtual assistants, operated by speech recognition, have entered many households over the last decade. Now self-driving cars are becoming a reality.

In the last few years, AI systems helped to make progress on some of the hardest problems in science.

Large AIs called recommender systems determine what you see on social media, which products are shown to you in online shops, and what gets recommended to you on YouTube. Increasingly they are not just recommending the media we consume, but based on their capacity to generate images and texts, they are also creating the media we consume

Another aspect of the argument is that the nature of scientific advancement has changed, and that innovations now are more incremental and less ‘disruptive.’ The journal Nature has very recently published Papers and patents are becoming less disruptive over time.

Recent decades have witnessed exponential growth in the volume of new scientific and technological knowledge, thereby creating conditions that should be ripe for major advances. Yet contrary to this view, studies suggest that progress is slowing in several major fields.

We find that papers and patents are increasingly less likely to break with the past in ways that push science and technology in new directions. This pattern holds universally across fields and is robust across multiple different citation- and text-based metrics. … Overall, our results suggest that slowing rates of disruption may reflect a fundamental shift in the nature of science and technology.

One hypothesis is that

as scientific fields have developed, it takes longer and longer for new experts to learn all they need to make important discoveries.

So from my perspective, I think it’s pretty crazy to think that we have hit an innovation plateau. What we are seeing is that technology is replacing labor, and working its way up the value chain.

Productivity and Demand?

This argument goes all the way back to Tom Friedman’s seminal 2005 book The World Is Flat, the subtitle of which was ‘A Brief History of the Twenty-First Century.’ He argued that there is fungible and non-fungible work, and that fungible work is being displaced by technology. He also argued for the emergence of ‘super-empowered’ individuals: individuals who can harness disruptive, empowering technologies. ChatGPT and other LLMs certainly seem like empowering tools. These two factors, along with globalization, have driven increases in the returns to education, which in turn drive income and wealth inequality.

How has productivity affected the distribution of wages?

Wages have been spreading out across workers over time – or in other words, the 90th/50th wage ratio has risen over time. A key question is, has the productivity distribution also spread out across worker skill levels over time? Using our calculations of productivity by skill level for the U.S., we show that the distributions of both wages and productivity have spread out over time, as the right tail lengthens for both. We add OECD countries, showing that the wage-productivity correlation exists, such that gains in aggregate productivity, or GDP per person, have resulted in higher wages for workers at the top and bottom of the wage distribution. However, across countries, those workers in the upper income ranks have seen their wages rise the most over time. The most likely international factor explaining these wage increases is the skill-biased technological change of the digital revolution. The new AI revolution that has just begun seems to be having a similar skill-biased effects on wages. But this current AI, called “supervised learning,” is relatively similar to past technological change. The AI of the distant future will be “unsupervised learning,” and it could eventually have an effect on the jobs of the most highly skilled.

As income is increasingly distributed towards higher-skilled, upper-income workers, one would expect the aggregate marginal propensity to consume to decrease.

So perhaps (hypothesis) this is the answer: the gains from technology are not equally distributed. Yes, some high-skilled workers are becoming more empowered, but perhaps a larger portion of the mid-distribution population is being displaced into lower-productivity (service?) jobs. The result would be an aggregate decrease in consumption, because returns accrue to those with a lower marginal propensity to consume.
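A toy calculation makes the mechanism in this hypothesis concrete. Everything in the sketch below is hypothetical: the two income groups, their incomes, and their propensities to consume are made-up numbers, and applying a single propensity to each group's whole income is a deliberate simplification.

```python
def aggregate_consumption(groups):
    """Sum income * propensity to consume over (income, propensity) pairs."""
    return sum(income * mpc for income, mpc in groups)


# Hypothetical economy with total income of 100 in both scenarios.
# Each tuple is (income, propensity to consume).
before = [(50.0, 0.9), (50.0, 0.6)]  # middle earners, top earners
after = [(40.0, 0.9), (60.0, 0.6)]   # 10 units of income shift to the top

c_before = aggregate_consumption(before)
c_after = aggregate_consumption(after)

print(f"consumption before: {c_before:.1f}")
print(f"consumption after:  {c_after:.1f}")
print(f"change: {c_after - c_before:+.1f}")
```

With total income unchanged, shifting income toward the group that spends a smaller share lowers aggregate consumption (from 75 to 72 with these made-up numbers). The size of the effect depends entirely on how far apart the two propensities are.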

Anyway, I’m not sure that I have the answer, and I certainly don’t think I’m smarter than the collective Summers/Stiglitz/Gordon/Cowen crew.

Some Closing Tweets

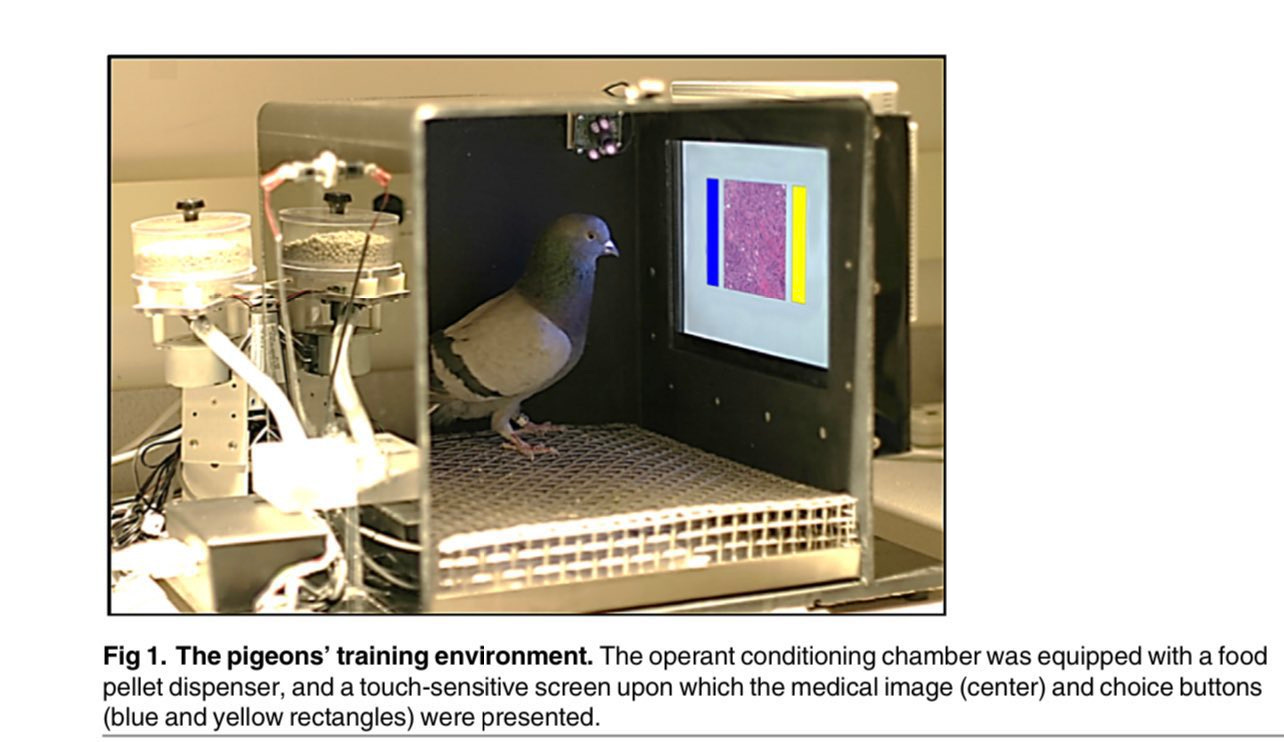

Who Needs AI (Or Maybe Just Count on the Pigeons)

Pigeons (Columba livia) as Trainable Observers of Pathology and Radiology Breast Cancer Images

Pathologists and radiologists spend years acquiring and refining their medically essential visual skills.

We report here that pigeons (Columba livia) … can serve as promising surrogate observers of medical images, a capability not previously documented. The birds proved to have a remarkable ability to distinguish benign from malignant human breast histopathology after training with differential food reinforcement; even more importantly, the pigeons were able to generalize what they had learned when confronted with novel image sets.

The GPT in ChatGPT stands for Generative Pre-trained Transformer.

GPT-3 was trained on 314 million petaFLOP of computation; Minerva, the current state of the art, which can solve complex mathematical problems at a college level, was trained on 2.2 billion petaFLOP.

Training ChatGPT reportedly cost OpenAI $12 million for a single training run.